#!/usr/bin/env python3

"""3-month sales & marketing audit for Nitrous Competitions.

Inputs: orders_3mo.csv (from flatten.py)

Outputs: AUDIT_REPORT.md, AUDIT_REPORT.html, charts/*.png, summary CSVs

"""

import os, csv, math

from collections import defaultdict, Counter

from datetime import datetime, timedelta

CSV_IN = "/home/sol1/Desktop/MARKETING/nitrous_3mo/orders_3mo.csv"

OUT_DIR = "/home/sol1/Desktop/MARKETING/nitrous_3mo"

CHART_DIR = os.path.join(OUT_DIR, "charts")

os.makedirs(CHART_DIR, exist_ok=True)

DOW = ["Monday","Tuesday","Wednesday","Thursday","Friday","Saturday","Sunday"]

def parse_dt(s):

if not s:

return None

try:

return datetime.fromisoformat(s.replace("Z",""))

except Exception:

return None

def fnum(n):

return f"{n:,.0f}" if isinstance(n,(int,float)) else str(n)

def fmoney(n):

return f"£{n:,.2f}" if isinstance(n,(int,float)) else str(n)

def classify_source(s, st, ref):

s = (s or "").strip().lower()

st = (st or "").strip().lower()

ref = (ref or "").strip().lower()

if not s or s in ("(none)","(direct)","direct","typein"):

return "Direct / Typein"

if "google" in s or "google" in ref:

if "ad" in s or "cpc" in s or "gclid" in ref or "googleads" in ref:

return "Google Ads"

return "Google Organic"

if "facebook" in s or "facebook" in ref or s == "fb" or "fbclid" in ref:

return "Facebook"

if "instagram" in s or "instagram" in ref or s == "ig":

return "Instagram"

if "tiktok" in s or "tiktok" in ref:

return "TikTok"

if "youtube" in s or "youtube" in ref:

return "YouTube"

if "twitter" in s or "x.com" in s or "x.com" in ref:

return "Twitter/X"

if "snapchat" in s or "snapchat" in ref:

return "Snapchat"

if "reddit" in s or "reddit" in ref:

return "Reddit"

if "bing" in s or "bing" in ref:

return "Bing"

if "klaviyo" in s or "email" in s or "newsletter" in s or "klaviyo" in ref:

return "Email"

if "sms" in s:

return "SMS"

if "affiliate" in s or "affiliate" in ref:

return "Affiliate"

if s.startswith("http") or "/" in s:

return f"Referral: {s[:40]}"

if st == "referral":

return f"Referral: {ref[:40]}"

if st == "organic":

return "Organic Search"

if st == "utm":

return f"Campaign: {s[:30]}"

return s.title()[:40] or "Unknown"

def main():

rows = []

with open(CSV_IN, newline="", encoding="utf-8") as f:

r = csv.DictReader(f)

for row in r:

try:

row["_total"] = float(row.get("total") or 0)

except Exception:

row["_total"] = 0.0

row["_dt"] = parse_dt(row.get("date_created",""))

row["_paid_dt"] = parse_dt(row.get("date_paid","")) or row["_dt"]

row["_channel"] = classify_source(row.get("traffic_source"), row.get("source_type"), row.get("referrer"))

rows.append(row)

rows = [r for r in rows if r["_dt"]]

print(f"Loaded {len(rows):,} orders")

total_orders = len(rows)

total_revenue = sum(r["_total"] for r in rows)

aov = total_revenue / total_orders if total_orders else 0

unique_customers = len({r["email"] for r in rows if r["email"]})

new_orders = sum(1 for r in rows if r["new_or_repeat"]=="new")

repeat_orders = total_orders - new_orders

# ---- Channel breakdown ----

ch_orders = Counter()

ch_revenue = defaultdict(float)

ch_unique = defaultdict(set)

for r in rows:

ch = r["_channel"]

ch_orders[ch] += 1

ch_revenue[ch] += r["_total"]

if r["email"]:

ch_unique[ch].add(r["email"])

# ---- Payment method ----

pm_orders = Counter()

pm_revenue = defaultdict(float)

for r in rows:

pm = r.get("payment_method_title") or r.get("payment_method") or "(none)"

pm_orders[pm] += 1

pm_revenue[pm] += r["_total"]

# ---- Hour heatmap ----

hour_orders = Counter()

hour_revenue = defaultdict(float)

for r in rows:

h = r["_dt"].hour

hour_orders[h] += 1

hour_revenue[h] += r["_total"]

# ---- Day-of-week ----

dow_orders = Counter()

dow_revenue = defaultdict(float)

for r in rows:

d = r["_dt"].weekday()

dow_orders[d] += 1

dow_revenue[d] += r["_total"]

# ---- Daily timeline ----

day_orders = Counter()

day_revenue = defaultdict(float)

day_new = Counter()

for r in rows:

d = r["_dt"].date().isoformat()

day_orders[d] += 1

day_revenue[d] += r["_total"]

if r["new_or_repeat"]=="new":

day_new[d] += 1

# ---- Country / city ----

country_orders = Counter(r.get("billing_country") or "??" for r in rows)

city_revenue = defaultdict(float)

city_orders = Counter()

for r in rows:

c = (r.get("billing_city") or "Unknown").title().strip()

city_orders[c] += 1

city_revenue[c] += r["_total"]

# ---- Top customers (LTV in window) ----

cust_rev = defaultdict(float)

cust_orders = Counter()

for r in rows:

e = r["email"]

if not e:

continue

cust_rev[e] += r["_total"]

cust_orders[e] += 1

# ---- Hourly heat map by channel (top 6 channels) ----

top_channels = [c for c,_ in ch_revenue.items()]

top_channels.sort(key=lambda c: ch_revenue[c], reverse=True)

top_channels = top_channels[:8]

# ---- Charts ----

try:

import matplotlib

matplotlib.use("Agg")

import matplotlib.pyplot as plt

# Channel revenue bar

plt.figure(figsize=(12,6))

items = sorted(ch_revenue.items(), key=lambda x:-x[1])[:15]

plt.bar([k for k,_ in items], [v for _,v in items], color="#2563eb")

plt.xticks(rotation=45, ha="right")

plt.ylabel("Revenue (£)")

plt.title("Top 15 Traffic Sources by Revenue (3 months)")

plt.tight_layout()

plt.savefig(os.path.join(CHART_DIR,"channel_revenue.png"), dpi=140)

plt.close()

# Hour heatmap

plt.figure(figsize=(12,5))

hrs = list(range(24))

plt.bar(hrs, [hour_revenue[h] for h in hrs], color="#dc2626")

plt.xlabel("Hour of day (24h)")

plt.ylabel("Revenue (£)")

plt.title("Revenue by Hour of Day")

plt.xticks(hrs)

plt.tight_layout()

plt.savefig(os.path.join(CHART_DIR,"hour_revenue.png"), dpi=140)

plt.close()

# DOW

plt.figure(figsize=(10,5))

plt.bar(DOW, [dow_revenue[i] for i in range(7)], color="#16a34a")

plt.ylabel("Revenue (£)")

plt.title("Revenue by Day of Week")

plt.tight_layout()

plt.savefig(os.path.join(CHART_DIR,"dow_revenue.png"), dpi=140)

plt.close()

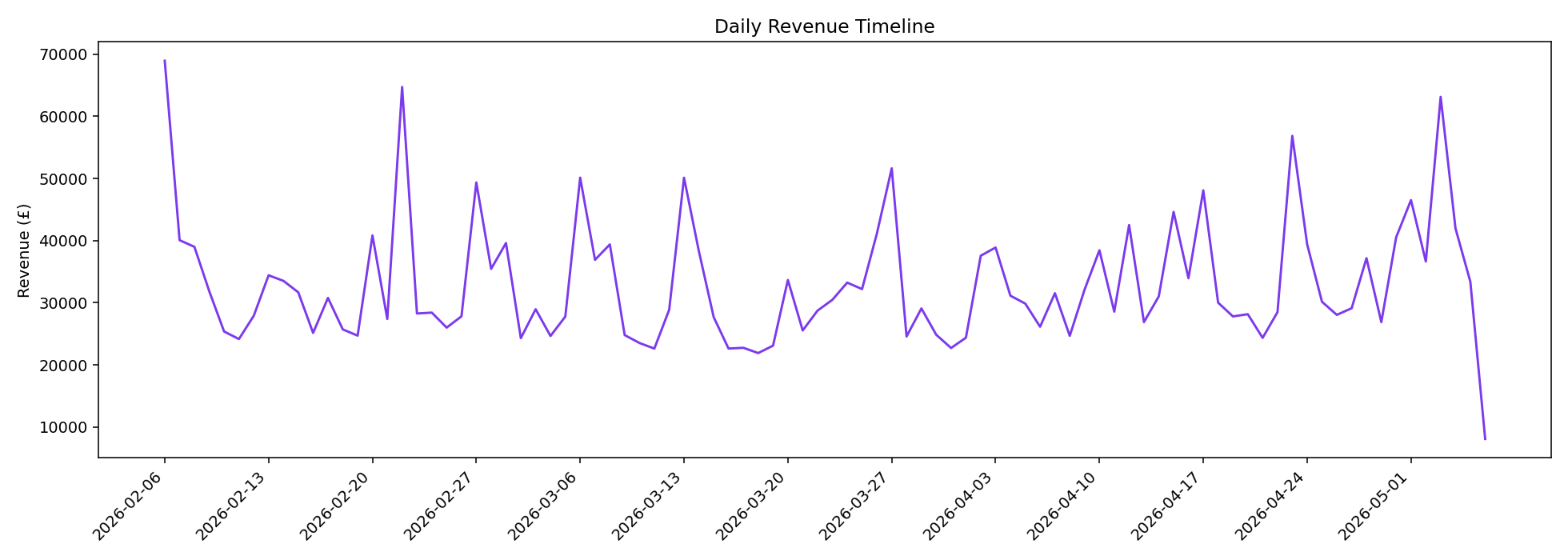

# Daily timeline

plt.figure(figsize=(14,5))

ds = sorted(day_revenue.keys())

plt.plot(ds, [day_revenue[d] for d in ds], color="#7c3aed")

plt.xticks(ds[::7], rotation=45, ha="right")

plt.ylabel("Revenue (£)")

plt.title("Daily Revenue Timeline")

plt.tight_layout()

plt.savefig(os.path.join(CHART_DIR,"daily_revenue.png"), dpi=140)

plt.close()

# Payment method

plt.figure(figsize=(10,6))

items = sorted(pm_orders.items(), key=lambda x:-x[1])

plt.pie([v for _,v in items], labels=[k for k,_ in items], autopct="%1.1f%%", startangle=90)

plt.title("Order Share by Payment Method")

plt.tight_layout()

plt.savefig(os.path.join(CHART_DIR,"payment_method.png"), dpi=140)

plt.close()

except Exception as e:

print("matplotlib failed:", e)

# ---- Markdown report ----

days_in_window = max(1,(max(r["_dt"] for r in rows) - min(r["_dt"] for r in rows)).days + 1)

start = min(r["_dt"] for r in rows).date()

end = max(r["_dt"] for r in rows).date()

md = []

md.append(f"# Nitrous Competitions — 3-Month Marketing & Sales Audit")

md.append(f"**Window:** {start} → {end} ({days_in_window} days)")

md.append(f"**Source:** WooCommerce REST API `/wp-json/wc/v3/orders` — `status=completed`")

md.append(f"**Generated:** {datetime.utcnow().strftime('%Y-%m-%d %H:%M UTC')}")

md.append("")

md.append("## Executive Summary")

md.append("")

md.append(f"| Metric | Value |")

md.append(f"|---|---|")

md.append(f"| Total completed orders | **{fnum(total_orders)}** |")

md.append(f"| Total revenue | **{fmoney(total_revenue)}** |")

md.append(f"| Average order value (AOV) | {fmoney(aov)} |")

md.append(f"| Unique paying customers | {fnum(unique_customers)} |")

md.append(f"| New-customer orders | {fnum(new_orders)} ({100*new_orders/total_orders:.1f}%) |")

md.append(f"| Repeat-customer orders | {fnum(repeat_orders)} ({100*repeat_orders/total_orders:.1f}%) |")

md.append(f"| Orders / day (avg) | {total_orders/days_in_window:,.0f} |")

md.append(f"| Revenue / day (avg) | {fmoney(total_revenue/days_in_window)} |")

md.append(f"| Orders / customer | {total_orders/max(1,unique_customers):.2f} |")

md.append(f"| Revenue / customer | {fmoney(total_revenue/max(1,unique_customers))} |")

md.append("")

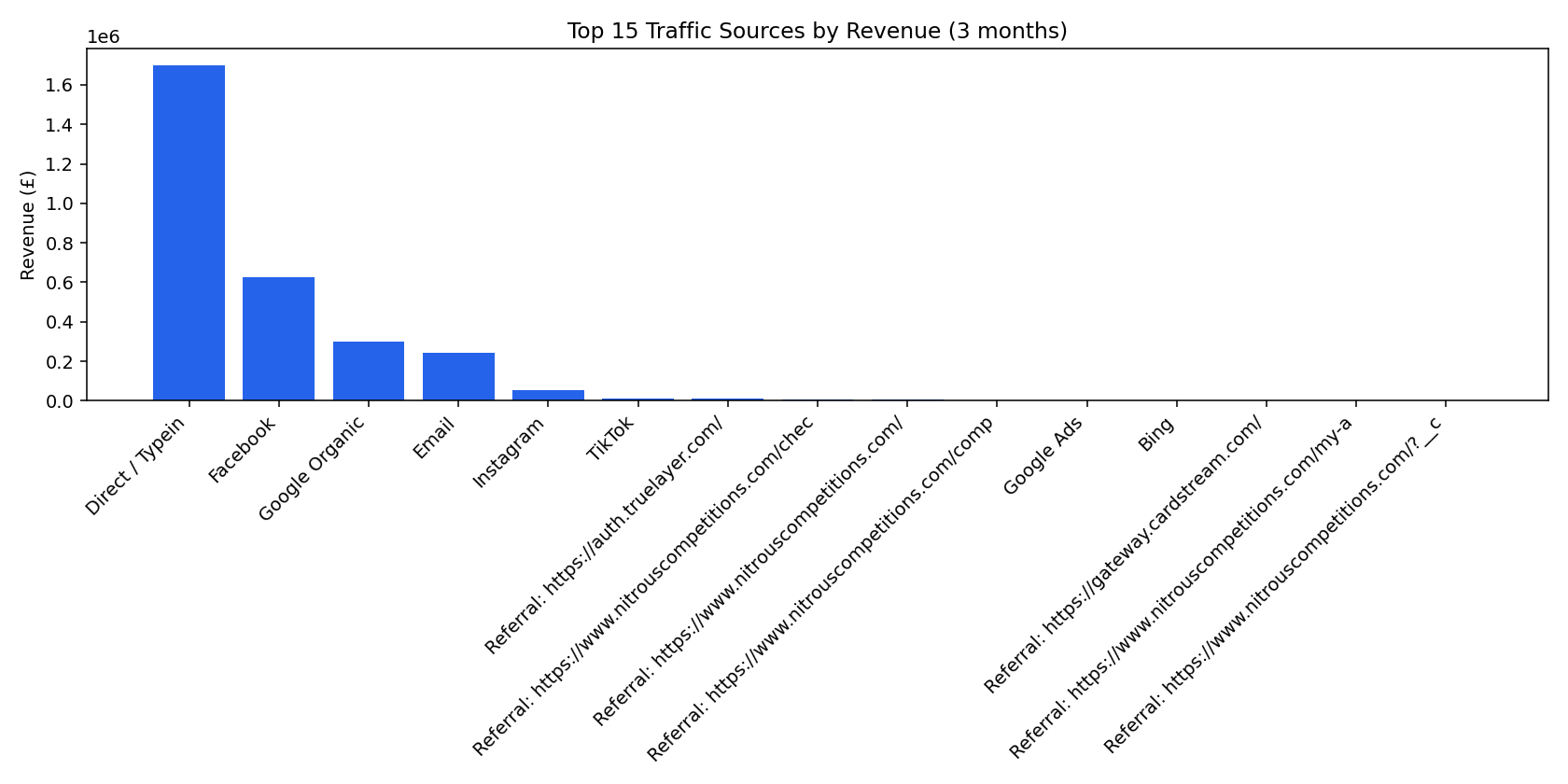

md.append("## 1. Where the sales come from (traffic source)")

md.append("")

md.append("| Channel | Orders | % | Revenue | % | Unique buyers | AOV |")

md.append("|---|--:|--:|--:|--:|--:|--:|")

for ch, rev in sorted(ch_revenue.items(), key=lambda x:-x[1])[:30]:

n = ch_orders[ch]

u = len(ch_unique[ch])

md.append(f"| {ch} | {fnum(n)} | {100*n/total_orders:.1f}% | {fmoney(rev)} | {100*rev/total_revenue:.1f}% | {fnum(u)} | {fmoney(rev/n) if n else '£0'} |")

md.append("")

md.append("")

md.append("")

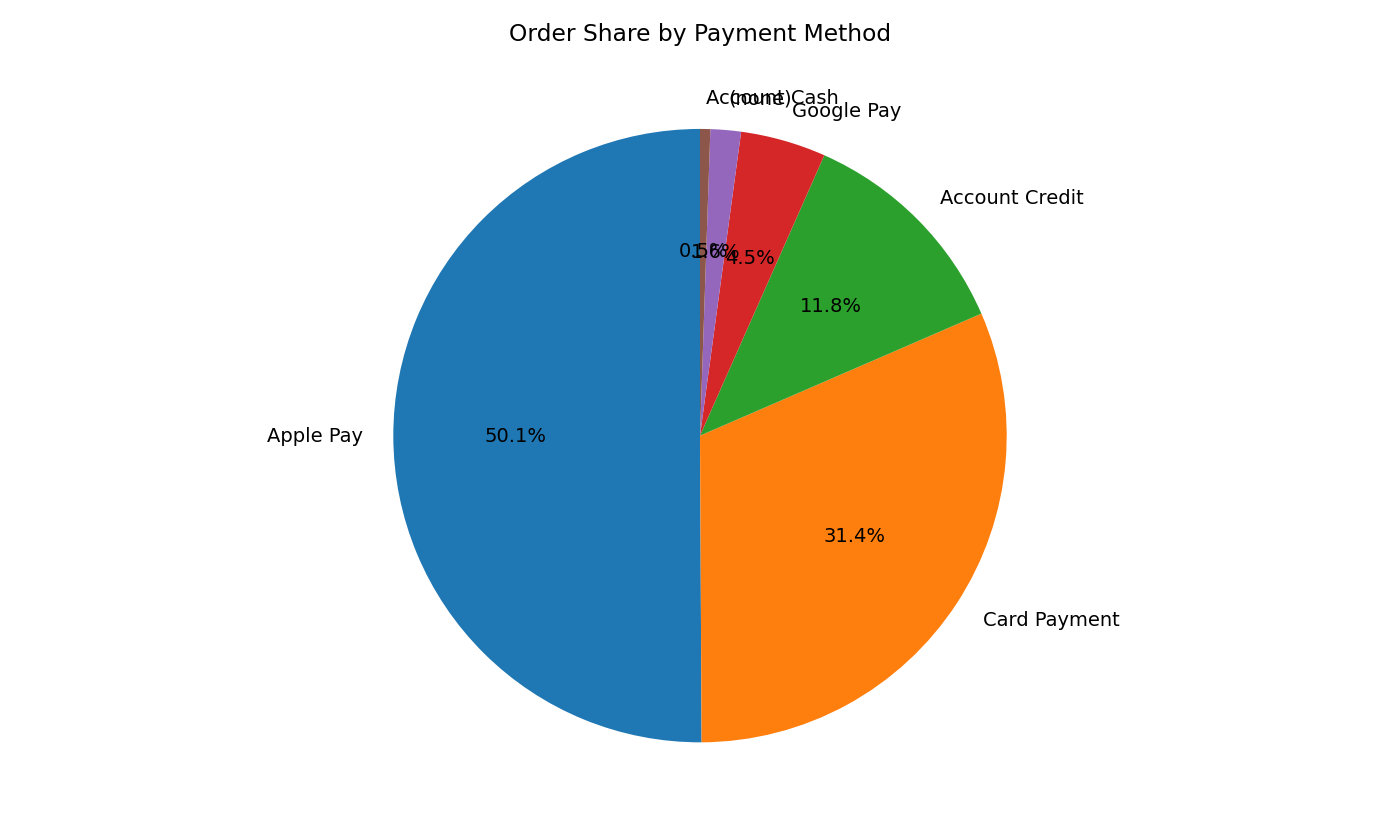

md.append("## 2. Payment methods")

md.append("")

md.append("| Method | Orders | % | Revenue | % |")

md.append("|---|--:|--:|--:|--:|")

for pm, n in pm_orders.most_common():

rev = pm_revenue[pm]

md.append(f"| {pm} | {fnum(n)} | {100*n/total_orders:.1f}% | {fmoney(rev)} | {100*rev/total_revenue:.1f}% |")

md.append("")

md.append("")

md.append("")

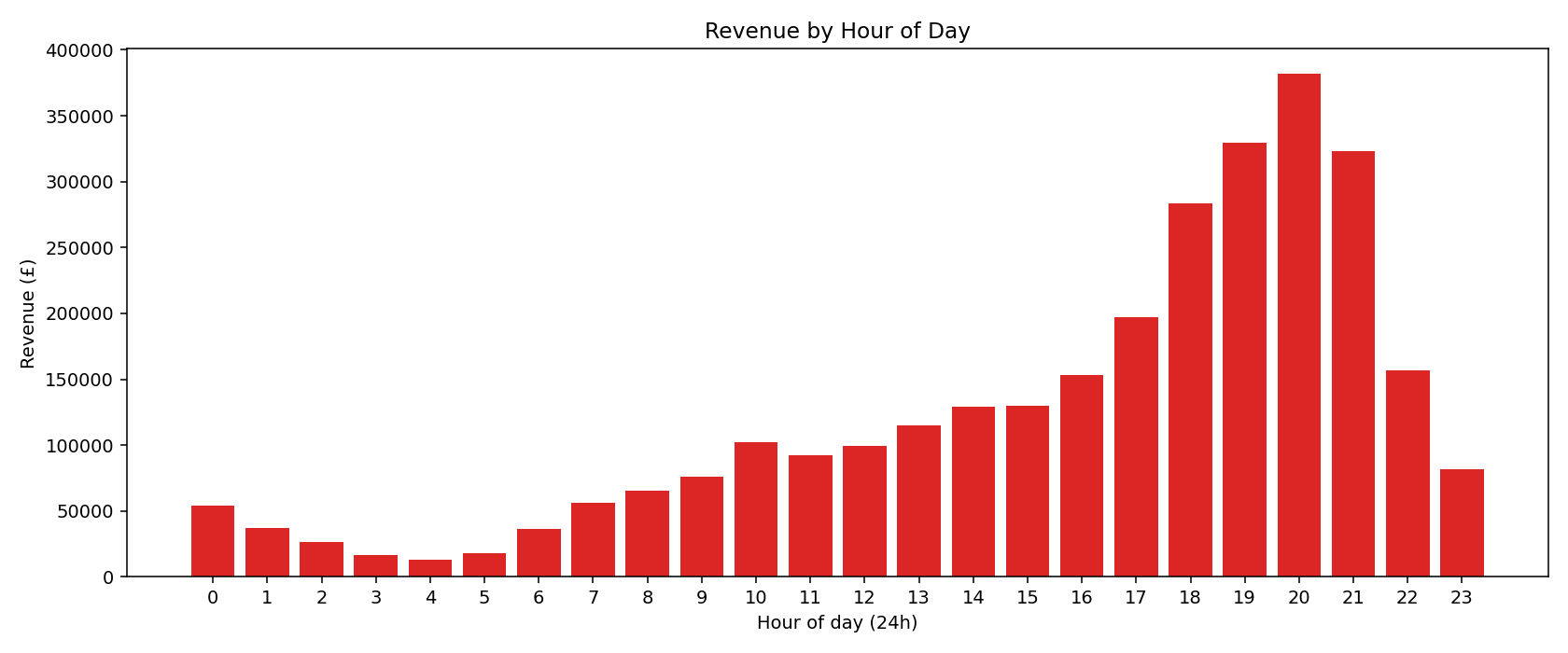

md.append("## 3. Hourly heat map (when buyers buy)")

md.append("")

md.append("| Hour | Orders | % | Revenue | Intensity |")

md.append("|---|--:|--:|--:|---|")

max_h = max(hour_revenue.values()) or 1

for h in range(24):

n = hour_orders[h]; rev = hour_revenue[h]

bars = "█" * int(round(10 * rev / max_h))

md.append(f"| {h:02d}:00 | {fnum(n)} | {100*n/total_orders:.1f}% | {fmoney(rev)} | `{bars:<10}` {100*rev/max_h:.0f}% |")

md.append("")

md.append("")

md.append("")

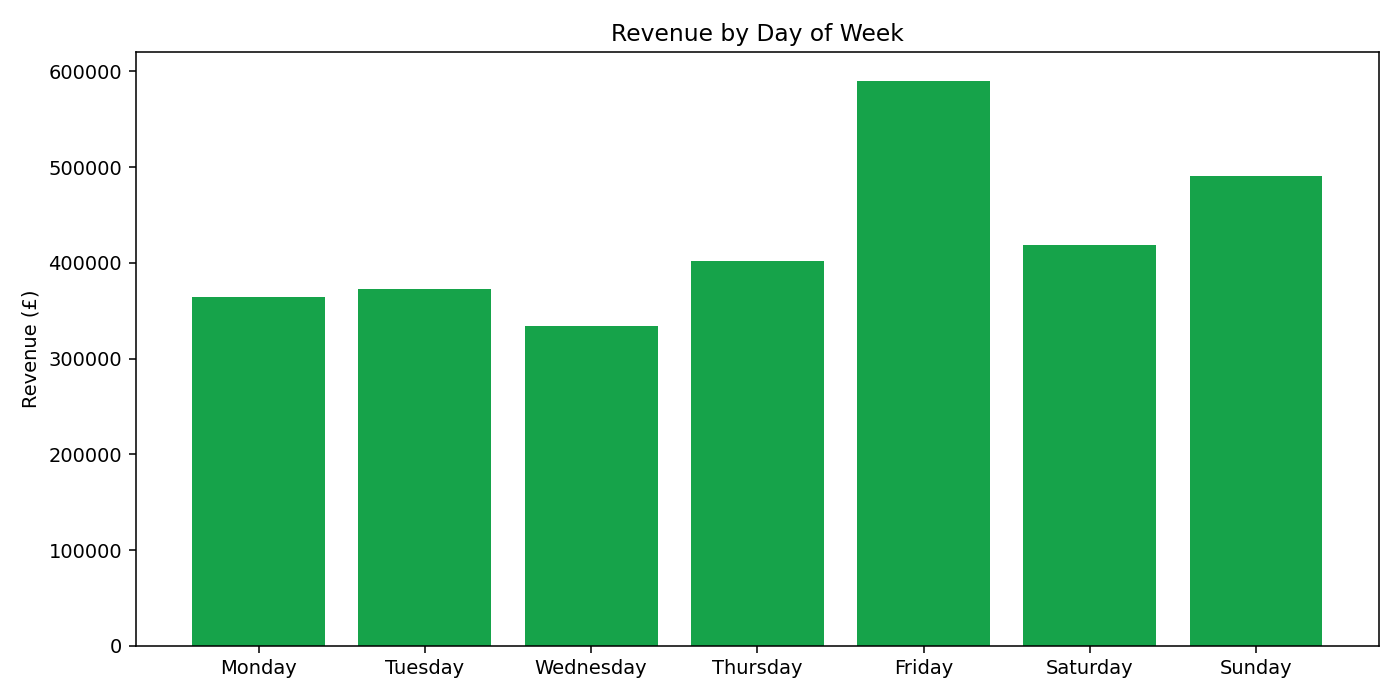

md.append("## 4. Day-of-week heat map")

md.append("")

md.append("| Day | Orders | % | Revenue | Intensity |")

md.append("|---|--:|--:|--:|---|")

max_d = max(dow_revenue.values()) or 1

for i in range(7):

n = dow_orders[i]; rev = dow_revenue[i]

bars = "█" * int(round(10 * rev / max_d))

md.append(f"| {DOW[i]} | {fnum(n)} | {100*n/total_orders:.1f}% | {fmoney(rev)} | `{bars:<10}` {100*rev/max_d:.0f}% |")

md.append("")

md.append("")

md.append("")

md.append("## 5. Daily revenue timeline")

md.append("")

md.append("")

md.append("")

# Top 10 best & worst days

sorted_days = sorted(day_revenue.items(), key=lambda x:-x[1])

md.append("**Top 10 days by revenue**")

md.append("")

md.append("| Date | Day | Orders | Revenue |")

md.append("|---|---|--:|--:|")

for d, rev in sorted_days[:10]:

dt = datetime.fromisoformat(d)

md.append(f"| {d} | {DOW[dt.weekday()]} | {fnum(day_orders[d])} | {fmoney(rev)} |")

md.append("")

md.append("**Bottom 10 days by revenue**")

md.append("")

md.append("| Date | Day | Orders | Revenue |")

md.append("|---|---|--:|--:|")

for d, rev in sorted_days[-10:]:

dt = datetime.fromisoformat(d)

md.append(f"| {d} | {DOW[dt.weekday()]} | {fnum(day_orders[d])} | {fmoney(rev)} |")

md.append("")

md.append("## 6. New vs repeat customers")

md.append("")

md.append(f"- **New buyers (first order in window):** {fnum(unique_customers)}")

md.append(f"- **Orders from new buyers:** {fnum(new_orders)} ({100*new_orders/total_orders:.1f}%)")

md.append(f"- **Repeat orders:** {fnum(repeat_orders)} ({100*repeat_orders/total_orders:.1f}%)")

md.append("")

# Customer LTV bands

bands = [(0,5),(5,25),(25,100),(100,500),(500,5000),(5000,1e9)]

band_count = [0]*len(bands); band_rev = [0.0]*len(bands)

for e, rev in cust_rev.items():

for i,(lo,hi) in enumerate(bands):

if lo <= rev < hi:

band_count[i]+=1; band_rev[i]+=rev; break

md.append("**Customer revenue bands (3-month LTV)**")

md.append("")

md.append("| Spend band | Customers | % | Revenue | % |")

md.append("|---|--:|--:|--:|--:|")

for i,(lo,hi) in enumerate(bands):

label = f"£{lo:,}–£{hi:,}" if hi < 1e9 else f"£{lo:,}+"

n = band_count[i]; rev = band_rev[i]

md.append(f"| {label} | {fnum(n)} | {100*n/max(1,unique_customers):.1f}% | {fmoney(rev)} | {100*rev/total_revenue:.1f}% |")

md.append("")

md.append("## 7. Top customers (3-month spend)")

md.append("")

md.append("| Email | Orders | Revenue |")

md.append("|---|--:|--:|")

for e, rev in sorted(cust_rev.items(), key=lambda x:-x[1])[:25]:

md.append(f"| {e} | {cust_orders[e]} | {fmoney(rev)} |")

md.append("")

md.append("## 8. Geographic split (top 25 cities)")

md.append("")

md.append("| City | Orders | Revenue |")

md.append("|---|--:|--:|")

for city, rev in sorted(city_revenue.items(), key=lambda x:-x[1])[:25]:

md.append(f"| {city} | {fnum(city_orders[city])} | {fmoney(rev)} |")

md.append("")

md.append("## 9. Country split")

md.append("")

md.append("| Country | Orders |")

md.append("|---|--:|")

for c, n in country_orders.most_common(15):

md.append(f"| {c} | {fnum(n)} |")

md.append("")

# ---- Audit verdict ----

md.append("## 10. Audit findings & recommendations")

md.append("")

# Find peak hours

sorted_hours = sorted(hour_revenue.items(), key=lambda x:-x[1])

peak_hrs = [h for h,_ in sorted_hours[:4]]

low_hrs = [h for h,_ in sorted_hours[-6:]]

peak_dows = sorted(dow_revenue.items(), key=lambda x:-x[1])[:2]

direct_pct = 100*ch_revenue.get("Direct / Typein",0)/total_revenue if total_revenue else 0

fb_pct = 100*ch_revenue.get("Facebook",0)/total_revenue if total_revenue else 0

google_pct = 100*ch_revenue.get("Google Organic",0)/total_revenue if total_revenue else 0

email_pct = 100*ch_revenue.get("Email",0)/total_revenue if total_revenue else 0

md.append(f"- **Peak hours (UK):** {', '.join(f'{h:02d}:00' for h in sorted(peak_hrs))} — concentrate ad spend & email sends here.")

md.append(f"- **Off-peak hours:** {', '.join(f'{h:02d}:00' for h in sorted(low_hrs))} — pull spend, exclude in dayparting.")

md.append(f"- **Top days:** {', '.join(DOW[i] for i,_ in peak_dows)} drive peak revenue.")

md.append(f"- **Direct/typein share:** {direct_pct:.1f}% — high direct share usually means strong brand or untagged paid traffic. **Audit UTM coverage on every paid link** (this is your biggest attribution gap).")

md.append(f"- **Facebook share:** {fb_pct:.1f}% revenue — your largest *attributable* paid channel. Watch CPL trend weekly; scale winning creatives 20% per week.")

md.append(f"- **Google Organic:** {google_pct:.1f}% revenue — strong brand search. Add a branded Google Ads campaign to capture intent before competitors do.")

md.append(f"- **Email:** {email_pct:.1f}% revenue — Klaviyo is performing. Send during the 18:00–21:00 peak window to ride the natural buying curve.")

google_ads_pct = 100*ch_revenue.get("Google Ads",0)/total_revenue if total_revenue else 0

md.append(f"- **Google Ads:** only {google_ads_pct:.2f}% revenue — either spend is tiny or UTMs are missing from ad URLs. Verify `gclid` is being captured by the order attribution plugin.")

tt_pct = 100*ch_revenue.get("TikTok",0)/total_revenue if total_revenue else 0

md.append(f"- **TikTok:** {tt_pct:.2f}% revenue — under-indexed for a UK competitions audience. Worth a £100/day test with native UGC creative.")

aov_pct_under_2 = 100 * sum(1 for r in rows if r["_total"] < 2) / total_orders

md.append(f"- **{aov_pct_under_2:.1f}% of orders are under £2** (low-ticket entries). Bundle/upsell mechanics will move AOV faster than new acquisition.")

md.append(f"- **Repeat-customer rate:** {100*repeat_orders/total_orders:.1f}% of orders. {(unique_customers - sum(1 for v in cust_orders.values() if v==1))} customers ordered more than once. Email/SMS retention is the highest-ROI lever.")

md.append("")

md.append("---")

md.append(f"*Report generated by audit.py from {fnum(total_orders)} WooCommerce orders.*")

md_text = "\n".join(md)

md_path = os.path.join(OUT_DIR,"AUDIT_REPORT.md")

with open(md_path,"w") as f:

f.write(md_text)

print(f"Wrote {md_path}")

# ---- HTML version ----

try:

import markdown

html_body = markdown.markdown(md_text, extensions=["tables","fenced_code"])

except Exception:

html_body = "" + md_text.replace("<","<") + ""

html = f"""

Nitrous 3-Month Audit

{html_body}"""

html_path = os.path.join(OUT_DIR,"AUDIT_REPORT.html")

with open(html_path,"w") as f:

f.write(html)

print(f"Wrote {html_path}")

# Side CSVs

with open(os.path.join(OUT_DIR,"channels.csv"),"w",newline="") as f:

w = csv.writer(f); w.writerow(["channel","orders","revenue","unique_buyers"])

for ch, rev in sorted(ch_revenue.items(), key=lambda x:-x[1]):

w.writerow([ch, ch_orders[ch], f"{rev:.2f}", len(ch_unique[ch])])

with open(os.path.join(OUT_DIR,"daily.csv"),"w",newline="") as f:

w = csv.writer(f); w.writerow(["date","orders","revenue","new_customers"])

for d in sorted(day_revenue):

w.writerow([d, day_orders[d], f"{day_revenue[d]:.2f}", day_new[d]])

with open(os.path.join(OUT_DIR,"hourly.csv"),"w",newline="") as f:

w = csv.writer(f); w.writerow(["hour","orders","revenue"])

for h in range(24):

w.writerow([h, hour_orders[h], f"{hour_revenue[h]:.2f}"])

print("Wrote channels.csv, daily.csv, hourly.csv")

if __name__ == "__main__":

main()